C++ Web Scraping: A Comprehensive Guide for Developers

Web scraping is the automated process of extracting data from websites. It’s a powerful technique used for various purposes, including market research, data analysis, and content aggregation. While many programming languages can be used for web scraping, C++ offers a unique blend of performance and control, making it a viable option for specific scenarios. This guide provides a comprehensive overview of C++ web scraping, covering essential libraries, techniques, and best practices.

Why Choose C++ for Web Scraping?

Python, with libraries such as Beautiful Soup and Scrapy, is more commonly associated with web scraping. C++ still offers several advantages, particularly when performance and resource management are critical:

- Performance: C++ is a compiled language known for its speed and efficiency. This can be crucial when dealing with large-scale web scraping projects that require processing vast amounts of data quickly.

- Resource Control: C++ allows for fine-grained control over memory management and system resources. This is beneficial when scraping from resource-constrained environments or when optimizing for minimal memory footprint.

- Integration with Existing Systems: If your existing infrastructure or data processing pipelines are already built using C++, integrating C++ web scraping tools can be more seamless and efficient than introducing a new language dependency.

Essential C++ Libraries for Web Scraping

Several C++ libraries can be used to build web scrapers. Here are some of the most popular and effective options:

libcurl

libcurl is a versatile and widely used library for transferring data with URLs. It supports various protocols, including HTTP, HTTPS, FTP, and more, making it ideal for fetching web pages. It is a low-level library, offering significant control but requiring more manual handling of network requests and responses.

Example:

    #include <iostream>
    #include <string>
    #include <curl/curl.h>

    // libcurl write callback: appends each received chunk to the std::string
    // that was registered through CURLOPT_WRITEDATA.
    static size_t write_data(char *ptr, size_t size, size_t nmemb, void *userdata)
    {
        auto *data = static_cast<std::string *>(userdata);
        data->append(ptr, size * nmemb);
        return size * nmemb;
    }

    int main()
    {
        std::string readBuffer;

        curl_global_init(CURL_GLOBAL_DEFAULT);
        CURL *curl = curl_easy_init();
        if (curl) {
            curl_easy_setopt(curl, CURLOPT_URL, "http://example.com");
            curl_easy_setopt(curl, CURLOPT_WRITEFUNCTION, write_data);
            curl_easy_setopt(curl, CURLOPT_WRITEDATA, &readBuffer);

            // Perform the request; the callback fills readBuffer as data arrives.
            CURLcode res = curl_easy_perform(curl);
            if (res != CURLE_OK)
                std::cerr << "curl_easy_perform() failed: " << curl_easy_strerror(res) << std::endl;
            else
                std::cout << readBuffer << std::endl;

            curl_easy_cleanup(curl);
        }
        curl_global_cleanup();
        return 0;
    }

Gumbo Parser

The Gumbo Parser is an HTML5 parsing library written in C. It’s designed to be robust and efficient, making it well-suited for parsing the HTML content retrieved by libcurl or other HTTP clients. It produces a parse tree that can be easily traversed to extract specific data elements.

Example:

    #include <iostream>
    #include <string>
    #include <gumbo.h>

    // Recursively prints the href of every <a> element in the parse tree.
    void search_for_links(GumboNode* node) {
        if (node->type == GUMBO_NODE_ELEMENT) {
            GumboAttribute* href;
            if (node->v.element.tag == GUMBO_TAG_A &&
                (href = gumbo_get_attribute(&node->v.element.attributes, "href"))) {
                std::cout << href->value << std::endl;
            }

            // Visit the children of this element.
            GumboVector* children = &node->v.element.children;
            for (unsigned int i = 0; i < children->length; ++i) {
                search_for_links(static_cast<GumboNode*>(children->data[i]));
            }
        }
    }

    int main() {
        std::string html_input = "<html><body><a href='http://example.com'>Example</a></body></html>";
        GumboOutput* output = gumbo_parse(html_input.c_str());
        search_for_links(output->root);
        gumbo_destroy_output(&kGumboDefaultOptions, output);
        return 0;
    }

cpp-netlib

cpp-netlib is a collection of C++ libraries for network programming. It includes an HTTP client that can be used to fetch web pages. It offers a more C++-idiomatic interface than libcurl’s C API, but it depends on Boost and can be more complex to set up and use for simple web scraping tasks.

Web Scraping Techniques in C++

Once you have chosen your libraries, you can implement various web scraping techniques:

Basic HTML Parsing

This involves downloading the HTML content of a web page and parsing it to extract specific data elements using libraries like Gumbo Parser. You’ll need to identify the HTML tags and attributes that contain the desired data and then write code to traverse the parse tree and extract those elements.

Using CSS Selectors

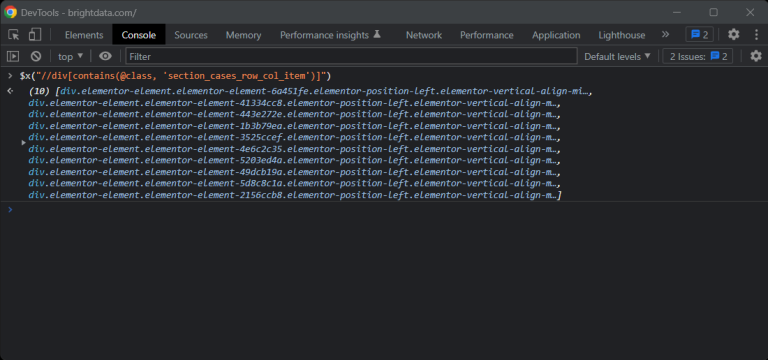

CSS selectors provide a powerful and flexible way to target specific elements within an HTML document. Some C++ libraries offer CSS selector support, allowing you to extract data based on CSS rules. This is usually more concise and maintainable than manually traversing the parse tree.
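
To make the idea concrete, here is a minimal sketch of how a compound selector such as `a.external` or `div#main.card` can be matched against an element. The `Element` and `SimpleSelector` types and both functions are hypothetical and written only for illustration; they are not the API of any particular library. The sketch handles only tag, `#id`, and `.class` parts, and it compares tag names case-sensitively.

```cpp
#include <algorithm>
#include <string>
#include <vector>

// Hypothetical, simplified element record; a real parser exposes far more.
struct Element {
    std::string tag;
    std::string id;
    std::vector<std::string> classes;
};

// A parsed compound selector such as "a.external" or "div#main.card".
struct SimpleSelector {
    std::string tag;                   // empty or "*" means "any tag"
    std::string id;                    // empty means "any id"
    std::vector<std::string> classes;  // every listed class must be present
};

// Parses only tag, #id and .class parts. Combinators, attribute selectors
// and pseudo-classes are deliberately out of scope for this sketch.
SimpleSelector parse_selector(const std::string& text) {
    SimpleSelector sel;
    std::string* target = &sel.tag;  // the part currently being filled in
    for (char c : text) {
        if (c == '#') {
            target = &sel.id;
        } else if (c == '.') {
            sel.classes.emplace_back();
            target = &sel.classes.back();
        } else {
            target->push_back(c);
        }
    }
    return sel;
}

// Returns true when the element satisfies every part of the selector.
bool matches(const Element& el, const SimpleSelector& sel) {
    if (!sel.tag.empty() && sel.tag != "*" && sel.tag != el.tag) return false;
    if (!sel.id.empty() && sel.id != el.id) return false;
    for (const std::string& cls : sel.classes) {
        if (std::find(el.classes.begin(), el.classes.end(), cls) == el.classes.end())
            return false;
    }
    return true;
}
```

In a scraper, a function like `matches` would be called on each element visited during tree traversal, with the `Element` fields filled in from the parser's tag and attribute data.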

Handling Dynamic Content (JavaScript)

Many modern websites rely heavily on JavaScript to generate content dynamically. A C++ HTTP client and HTML parser cannot execute JavaScript on their own. To scrape dynamic content, you’ll need to drive a headless browser (for example, headless Chrome or Firefox) through an automation tool such as Selenium or Puppeteer. The browser renders the page, and your C++ code then extracts data from the rendered HTML.

Dealing with Pagination

When scraping data from multiple pages, you’ll need to handle pagination. This involves identifying the URLs for subsequent pages and iterating through them to extract data from each page. You’ll need to parse the HTML to find the links to the next pages. Consider using a loop and carefully managing the number of requests to avoid overloading the server.
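
The loop described above might look like the following sketch. The `Page` struct, the `FetchPage` callback, and `scrape_all_pages` are hypothetical names introduced here; the callback stands in for whatever code downloads and parses one page (for example, libcurl plus an HTML parser) and is assumed to return an absolute URL for the next page. The sketch caps the number of pages, stops if a "next" link points back to a page already visited, and pauses between requests.

```cpp
#include <chrono>
#include <cstddef>
#include <functional>
#include <set>
#include <string>
#include <thread>
#include <vector>

// Hypothetical result of fetching and parsing one page.
struct Page {
    std::vector<std::string> items;  // data extracted from this page
    std::string next_url;            // absolute URL of the next page; empty if none
};

// Supplied by the caller: downloads and parses the page at the given URL.
using FetchPage = std::function<Page(const std::string&)>;

std::vector<std::string> scrape_all_pages(const std::string& start_url,
                                          const FetchPage& fetch_page,
                                          std::size_t max_pages,
                                          std::chrono::milliseconds delay) {
    std::vector<std::string> all_items;
    std::set<std::string> visited;
    std::string url = start_url;

    while (!url.empty() && visited.size() < max_pages) {
        // Stop if a "next" link leads back to a page we already processed.
        if (!visited.insert(url).second) break;

        Page page = fetch_page(url);
        all_items.insert(all_items.end(), page.items.begin(), page.items.end());

        url = page.next_url;
        // Pause before the next request to avoid overloading the server.
        if (!url.empty()) std::this_thread::sleep_for(delay);
    }
    return all_items;
}
```

Passing the fetch step in as a callback keeps the loop independent of any HTTP or parsing library, which also makes it easy to exercise with a fake fetcher in tests.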

Best Practices for C++ Web Scraping

To ensure your C++ web scraping project is successful and ethical, consider these best practices:

- Respect `robots.txt`: Always check the `robots.txt` file of the website you are scraping to understand which parts of the site are disallowed for bots.

- Implement Rate Limiting: Avoid overwhelming the server with too many requests in a short period. Implement delays between requests to mimic human browsing behavior.

- Use User-Agent Headers: Set a descriptive User-Agent header to identify your scraper and allow the website administrator to contact you if necessary.

- Handle Errors Gracefully: Implement error handling to gracefully handle network errors, timeouts, and unexpected HTML structures.

- Store Data Efficiently: Choose an appropriate data storage format (e.g., CSV, JSON, database) based on the volume and structure of the data you are extracting.

- Monitor Your Scraper: Regularly monitor your scraper’s performance and error logs to identify and address any issues.

- Consider the Legal Implications: Be aware of the terms of service and copyright restrictions of the website you are scraping.
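
As an illustration of the `robots.txt` check from the list above, the following sketch collects the `Disallow` prefixes that apply to all user agents and tests a path against them. It is a deliberately simplified reading of the format: it looks only at `User-agent: *` groups and plain prefix matches, and it ignores `Allow` rules, wildcards, and agent-specific groups. All function names are hypothetical.

```cpp
#include <algorithm>
#include <cctype>
#include <sstream>
#include <string>
#include <vector>

// Trims ASCII whitespace (including a trailing '\r') from both ends.
std::string trim(const std::string& s) {
    const char* ws = " \t\r\n";
    const std::string::size_type first = s.find_first_not_of(ws);
    if (first == std::string::npos) return "";
    const std::string::size_type last = s.find_last_not_of(ws);
    return s.substr(first, last - first + 1);
}

std::string to_lower(std::string s) {
    std::transform(s.begin(), s.end(), s.begin(),
                   [](unsigned char c) { return static_cast<char>(std::tolower(c)); });
    return s;
}

// Collects the Disallow path prefixes listed under "User-agent: *" groups.
std::vector<std::string> disallowed_prefixes(const std::string& robots_txt) {
    std::vector<std::string> prefixes;
    std::istringstream lines(robots_txt);
    std::string line;
    bool in_wildcard_group = false;
    bool last_was_agent = false;
    while (std::getline(lines, line)) {
        line = trim(line.substr(0, line.find('#')));  // drop comments
        const std::string::size_type colon = line.find(':');
        if (colon == std::string::npos) continue;
        const std::string key = to_lower(trim(line.substr(0, colon)));
        const std::string value = trim(line.substr(colon + 1));
        if (key == "user-agent") {
            // Consecutive User-agent lines belong to the same group.
            if (!last_was_agent) in_wildcard_group = false;
            if (value == "*") in_wildcard_group = true;
            last_was_agent = true;
        } else {
            last_was_agent = false;
            if (key == "disallow" && in_wildcard_group && !value.empty())
                prefixes.push_back(value);
        }
    }
    return prefixes;
}

// A path is allowed unless it starts with one of the disallowed prefixes.
bool is_allowed(const std::string& path, const std::vector<std::string>& prefixes) {
    for (const std::string& p : prefixes)
        if (path.compare(0, p.size(), p) == 0) return false;
    return true;
}
```

A scraper would fetch `/robots.txt` once per host, call `disallowed_prefixes` on the body, and consult `is_allowed` before requesting each path. For production use, a parser that implements the full matching rules is a safer choice than this sketch.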

Advanced C++ Web Scraping Techniques

Beyond the basics, more advanced techniques can enhance your C++ web scraping capabilities:

Proxy Rotation

To avoid being blocked by websites, use a proxy rotation strategy. This involves using a pool of proxy servers and rotating them periodically to mask your scraper’s IP address.
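
One simple rotation strategy is round-robin selection from a fixed pool, sketched below. The `ProxyPool` class and the proxy addresses in the usage note are hypothetical; the sketch is not thread-safe and does not track failing proxies.

```cpp
#include <cstddef>
#include <stdexcept>
#include <string>
#include <utility>
#include <vector>

// Hands out proxies from a fixed list in round-robin order.
class ProxyPool {
public:
    explicit ProxyPool(std::vector<std::string> proxies)
        : proxies_(std::move(proxies)), index_(0) {
        if (proxies_.empty()) throw std::invalid_argument("proxy list is empty");
    }

    // Returns the next proxy and advances, wrapping around at the end.
    const std::string& next() {
        const std::string& proxy = proxies_[index_];
        index_ = (index_ + 1) % proxies_.size();
        return proxy;
    }

private:
    std::vector<std::string> proxies_;
    std::size_t index_;
};
```

With libcurl, the selected proxy can be applied before each request through the `CURLOPT_PROXY` option, for example `curl_easy_setopt(curl, CURLOPT_PROXY, pool.next().c_str());`, where `pool` was built from a list such as `http://proxy-a.example:8080` and `http://proxy-b.example:8080`.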

CAPTCHA Solving

Some websites use CAPTCHAs to prevent bot activity. Integrating with a CAPTCHA solving service can help your scraper bypass these challenges. However, consider the ethical implications of bypassing security measures.

Machine Learning for Data Extraction

In some cases, the structure of the HTML may be inconsistent or unpredictable. Machine learning techniques can be used to identify and extract data based on patterns and context rather than relying solely on HTML tags and attributes.

Example C++ Web Scraping Project Outline

Here’s a basic outline for a C++ web scraping project:

- Project Setup: Create a new C++ project and install the necessary libraries (e.g., libcurl, Gumbo Parser).

- URL Fetching: Use libcurl to fetch the HTML content of the target web page.

- HTML Parsing: Use Gumbo Parser to parse the HTML content and create a parse tree.

- Data Extraction: Extract the desired data elements from the parse tree, either by manual tree traversal or with a CSS selector library layered on top of the parser.

- Data Storage: Store the extracted data in a suitable format (e.g., CSV file, database).

- Error Handling: Implement error handling to gracefully handle network errors and parsing errors.

- Rate Limiting: Implement rate limiting to avoid overloading the server.

- Testing: Thoroughly test your scraper to ensure it extracts the correct data and handles errors gracefully.
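
For the Data Storage step in the outline above, a CSV writer needs to quote fields that contain commas, quotes, or line breaks, because scraped text often does. The following is a minimal sketch of that quoting; `csv_escape` and `write_csv_row` are hypothetical helper names, and the output stream could be a `std::ofstream` in a real project.

```cpp
#include <cstddef>
#include <ostream>
#include <sstream>
#include <string>
#include <vector>

// Quotes a field when it contains a comma, double quote, or line break,
// and doubles any embedded double quotes.
std::string csv_escape(const std::string& field) {
    if (field.find_first_of(",\"\r\n") == std::string::npos) return field;
    std::string out = "\"";
    for (char c : field) {
        if (c == '"') out += '"';  // an embedded quote is written twice
        out += c;
    }
    out += '"';
    return out;
}

// Writes one record as a comma-separated line.
void write_csv_row(std::ostream& out, const std::vector<std::string>& fields) {
    for (std::size_t i = 0; i < fields.size(); ++i) {
        if (i != 0) out << ',';
        out << csv_escape(fields[i]);
    }
    out << '\n';
}
```

Writing to a generic `std::ostream` keeps the helper usable with files, string buffers, and standard output alike.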

Conclusion

C++ web scraping offers a powerful and efficient way to extract data from websites, particularly when performance and resource control are crucial. By leveraging libraries like libcurl and Gumbo Parser, developers can build robust and scalable web scrapers. Remember to adhere to best practices and ethical considerations to ensure your scraping activities are responsible and sustainable. While Python may be the more common choice, C++ presents an appealing alternative in situations demanding speed and precision. This guide provides a solid foundation for building effective C++ web scraping solutions.