Concurrency vs Parallelism: Understanding the Key Differences

In computer science, the terms concurrency and parallelism are often used interchangeably, which causes confusion. Both deal with multiple tasks that appear to run at the same time, but they are different ideas: concurrency describes how a program is structured to handle several tasks, while parallelism describes tasks that physically execute at the same instant. Knowing the difference matters when designing efficient and scalable software systems. This article covers the core distinctions between the two, along with their benefits, drawbacks, and practical applications.

Defining Concurrency

Concurrency, at its heart, is about managing multiple tasks within a single system. It focuses on structuring a program so that several tasks can be in progress over the same period, even if only one of them is actively executing at any given moment. Think of a chef juggling multiple orders in a restaurant. The chef isn’t necessarily preparing all the dishes simultaneously, but they are switching between them, making progress on each order in turn. This interleaved progress is what defines concurrency.

A key aspect of concurrency is that it doesn’t require multiple processing units. It can be achieved on a single-core processor by rapidly switching between tasks. This switching is typically managed by an operating system or a runtime environment, using techniques like time-slicing or cooperative multitasking. The goal is to give the illusion of simultaneous execution, improving responsiveness and resource utilization.
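As a rough illustration of cooperative multitasking on a single thread, here is a minimal sketch using Python's `asyncio`. The names `prepare` and `kitchen` and the order names are hypothetical, chosen only to mirror the chef analogy. Each task does one step and then hands control back to the event loop, so the two orders make progress in turn without any second core being involved.

```python
import asyncio


async def prepare(order, steps, log):
    """Work on one order, yielding to the event loop after every step."""
    for step in range(1, steps + 1):
        log.append(f"{order}: step {step}")
        # sleep(0) does no waiting; it only lets other tasks run.
        await asyncio.sleep(0)


async def kitchen():
    """Run two hypothetical orders concurrently on one thread."""
    log = []
    await asyncio.gather(
        prepare("soup", 2, log),
        prepare("salad", 2, log),
    )
    return log


if __name__ == "__main__":
    for entry in asyncio.run(kitchen()):
        print(entry)
```

The log alternates between the two orders, which is the interleaving described above: neither task finishes before the other starts, yet only one is ever executing.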

Defining Parallelism

Parallelism, on the other hand, is about executing multiple tasks truly simultaneously. It requires multiple processing units, such as multiple cores in a CPU or multiple machines in a distributed system. In our restaurant analogy, parallelism would be like having multiple chefs, each working on a different order at the same time. Each chef (processing unit) is independently executing a task, leading to a genuine speedup in overall execution time.

Parallelism uses the available hardware to run several tasks at the same instant. This can significantly reduce the time required to complete complex computations or process large datasets. However, parallelism also introduces challenges related to synchronization and communication between the parallel tasks. Proper coordination is essential to ensure that the tasks work together correctly and efficiently.
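A minimal sketch of this idea in Python, assuming a CPU-bound job that splits cleanly into independent chunks. The function names (`sum_of_squares`, `split_range`, `parallel_sum_of_squares`) are hypothetical; `ProcessPoolExecutor` is the standard-library process pool, used here because separate processes can run on separate cores.

```python
import os
from concurrent.futures import ProcessPoolExecutor


def sum_of_squares(bounds):
    """CPU-bound work for one chunk: sum n*n for n in [start, stop)."""
    start, stop = bounds
    return sum(n * n for n in range(start, stop))


def split_range(total, parts):
    """Split range(total) into at most `parts` contiguous (start, stop) chunks."""
    step = max(1, -(-total // parts))  # ceiling division, at least 1
    return [(lo, min(lo + step, total)) for lo in range(0, total, step)]


def parallel_sum_of_squares(total, workers=None):
    """Give each worker process one chunk, then combine the partial sums."""
    workers = workers or os.cpu_count() or 1
    with ProcessPoolExecutor(max_workers=workers) as pool:
        return sum(pool.map(sum_of_squares, split_range(total, workers)))
```

When calling `parallel_sum_of_squares` from a script, it is safest to do so under an `if __name__ == "__main__":` guard, since worker processes may re-import the main module. Any real speedup depends on the number of cores and on the chunks being large enough to outweigh the cost of starting processes and passing results back.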

Key Differences: Concurrency vs Parallelism

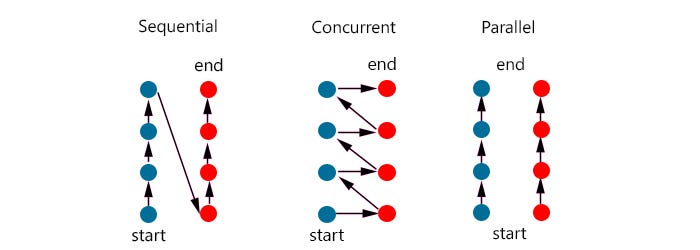

The core difference between concurrency and parallelism lies in how tasks are actually executed. Here’s a breakdown:

- Execution: Concurrency is about managing multiple tasks, but not necessarily executing them simultaneously. Parallelism is about executing multiple tasks truly simultaneously.

- Hardware: Concurrency can be achieved on a single-core processor. Parallelism requires multiple processing units.

- Focus: Concurrency focuses on improving responsiveness and resource utilization. Parallelism focuses on reducing execution time.

- Complexity: Concurrency is hard to manage when tasks share resources, because their steps can interleave in unexpected orders. Parallelism adds the cost of synchronizing and communicating between tasks that run on separate processing units.

Illustrative Examples

To further clarify the concurrency vs parallelism distinction, consider these examples:

- Concurrency: A web server handling multiple client requests. The server switches between requests, processing each one in turn. This is concurrency because the server is managing multiple requests even if it’s only actively processing one at a time.

- Parallelism: A scientific simulation running on a cluster of computers. The simulation is divided into smaller tasks, and each task is executed on a different computer simultaneously. This is parallelism because the tasks are truly running in parallel, leveraging the power of multiple processors.

- Concurrency and Parallelism Combined: Video editing software using multiple threads to perform different tasks (e.g., decoding video, applying effects, encoding video). The software might use concurrency to manage these threads, switching between them as needed. If the computer has multiple cores, the threads can also run in parallel, further speeding up the editing process.
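The video editing example can be sketched as a pipeline of threads connected by queues. This is only an illustration under stated assumptions: the stage functions `decode`, `apply_effect`, and `encode` are hypothetical stand-ins that manipulate strings, and `run_pipeline` is not taken from any real editing tool. The structure is concurrent regardless of hardware; whether stages truly overlap in time depends on the cores available and on the runtime (in standard CPython builds, the global interpreter lock prevents pure-Python CPU-bound threads from running in parallel).

```python
import queue
import threading

SENTINEL = object()  # marks the end of the frame stream


def decode(frame):
    return f"decoded({frame})"


def apply_effect(frame):
    return f"fx({frame})"


def encode(frame):
    return f"encoded({frame})"


def stage(work, inbox, outbox):
    """Pull items from inbox, process them, and pass results downstream."""
    while True:
        item = inbox.get()
        if item is SENTINEL:
            outbox.put(SENTINEL)  # tell the next stage to stop as well
            break
        outbox.put(work(item))


def run_pipeline(frames, steps):
    """Run each step in its own thread, linked by FIFO queues."""
    queues = [queue.Queue() for _ in range(len(steps) + 1)]
    threads = [
        threading.Thread(target=stage, args=(step, queues[i], queues[i + 1]))
        for i, step in enumerate(steps)
    ]
    for t in threads:
        t.start()
    for frame in frames:
        queues[0].put(frame)
    queues[0].put(SENTINEL)

    results = []
    while (item := queues[-1].get()) is not SENTINEL:
        results.append(item)
    for t in threads:
        t.join()
    return results


if __name__ == "__main__":
    print(run_pipeline(["frame1", "frame2"], [decode, apply_effect, encode]))
```

Because each stage is a single thread reading from a FIFO queue, frames leave the pipeline in the order they entered, while a later frame can be decoded as an earlier one is still being encoded.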

Benefits and Drawbacks

Concurrency

Benefits:

- Improved responsiveness: Applications remain responsive even when performing long-running tasks.

- Better resource utilization: Resources are used more efficiently by overlapping tasks.

- Simplified program structure: Complex tasks can be broken down into smaller, more manageable concurrent units.

Drawbacks:

- Increased complexity: Managing concurrent tasks can be challenging, especially when dealing with shared resources.

- Potential for race conditions and deadlocks: Concurrent access to shared resources can lead to unpredictable behavior.

- Debugging difficulties: Debugging concurrent programs can be more difficult than debugging sequential programs.
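To make the race-condition drawback concrete, here is a minimal sketch of a shared counter protected by a lock. The `Counter` class and `hammer` helper are hypothetical names. The statement `self.value += 1` is a read-modify-write sequence, so without the lock two threads could read the same old value and one update could be lost; holding the lock makes each increment indivisible with respect to other threads.

```python
import threading


class Counter:
    """A counter that is safe to increment from several threads."""

    def __init__(self):
        self.value = 0
        self._lock = threading.Lock()

    def increment(self):
        # Only one thread at a time may read, add, and write back.
        with self._lock:
            self.value += 1


def hammer(counter, threads=4, increments=10_000):
    """Increment the counter from several threads and return the final value."""

    def work():
        for _ in range(increments):
            counter.increment()

    workers = [threading.Thread(target=work) for _ in range(threads)]
    for w in workers:
        w.start()
    for w in workers:
        w.join()
    return counter.value


if __name__ == "__main__":
    print(hammer(Counter()))
```

The lock trades some throughput for correctness. Taking several locks in inconsistent orders is one common way deadlocks arise, which is part of why such code is harder to debug than sequential code.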

Parallelism

Benefits:

- Reduced execution time: Tasks are completed faster by executing them simultaneously.

- Improved scalability: Applications can handle larger workloads by leveraging multiple processing units.

- Ability to solve complex problems: Problems that are too large to be solved on a single processor can be solved using parallelism.

Drawbacks:

- Increased complexity: Designing and implementing parallel programs can be challenging.

- Synchronization overhead: Coordinating parallel tasks can introduce significant overhead.

- Communication costs: Communication between parallel tasks can be expensive, especially in distributed systems.

- Hardware limitations: The benefits of parallelism are limited by the number of available processing units.
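One common way to estimate how far these limits bite is Amdahl's law, which the text above does not name but which fits the point about hardware limits: if only a fraction of a program can run in parallel, the serial remainder caps the speedup no matter how many processing units are added. The sketch below is a simple calculator for that formula; the function name `amdahl_speedup` is hypothetical, and the model ignores the synchronization and communication costs listed above, so real speedups are usually lower.

```python
def amdahl_speedup(parallel_fraction, workers):
    """Ideal speedup when `parallel_fraction` of the work is spread over `workers`.

    Amdahl's law: speedup = 1 / ((1 - p) + p / n)
    """
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if workers < 1:
        raise ValueError("workers must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / workers)


if __name__ == "__main__":
    for n in (1, 4, 16, 1000):
        print(f"{n:>4} workers: {amdahl_speedup(0.9, n):.2f}x")
```

With 90% of the work parallelizable, four workers give roughly a 3x speedup, and even a thousand workers stay just under 10x, because the remaining 10% still runs serially.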

Practical Applications

Both concurrency and parallelism are widely used in various applications. Here are some examples:

- Web servers: Handling multiple client requests concurrently.

- Databases: Processing multiple queries in parallel.

- Operating systems: Managing multiple processes and threads concurrently.

- Scientific simulations: Performing complex computations in parallel.

- Image and video processing: Processing large images and videos in parallel.

- Machine learning: Training machine learning models in parallel.

- Game development: Rendering graphics and simulating game physics in parallel.

Choosing Between Concurrency and Parallelism

The choice between concurrency and parallelism depends on the specific requirements of the application. If the goal is to improve responsiveness and resource utilization, concurrency may be the better choice. If the goal is to reduce execution time and improve scalability, parallelism may be more appropriate. In some cases, a combination of both techniques may be the best solution. Carefully consider the trade-offs between complexity, performance, and resource utilization when making this decision.

Factors to consider include:

- Available hardware: If multiple processing units are available, parallelism may be a viable option.

- Task dependencies: If tasks are highly dependent on each other, parallelism may be difficult to achieve.

- Synchronization requirements: If tasks require frequent synchronization, the overhead of parallelism may outweigh the benefits.

- Programming complexity: Parallel programs carry the coordination problems of concurrent ones and add the work of partitioning tasks and data across processing units.

Conclusion

While concurrency and parallelism are often used interchangeably, they represent distinct concepts in computer science. Concurrency is about managing multiple tasks, while parallelism is about executing multiple tasks simultaneously. Understanding the difference between the two is essential for designing efficient, scalable, and responsive software systems. By carefully considering the benefits and drawbacks of each approach, developers can choose the right strategy for their specific needs. Whether you’re building a web server, a scientific simulation, or a mobile app, a solid grasp of concurrency and parallelism will help you create better software.
