Scrape a Website with Python: A Comprehensive Guide

Web scraping, the process of automatically extracting data from websites, has become an indispensable tool for researchers, data analysts, and businesses alike. Python, with its rich ecosystem of libraries, provides a powerful and accessible platform for performing this task. This article provides a comprehensive guide on how to scrape a website with Python, covering everything from basic setup to advanced techniques.

Why Scrape Websites with Python?

Python’s popularity in web scraping stems from several key advantages:

- Ease of Use: Python’s syntax is relatively simple and easy to learn, making it accessible to beginners.

- Rich Libraries: Libraries like Beautiful Soup and Scrapy provide powerful tools for parsing HTML and navigating web pages.

- Extensive Community Support: A large and active community provides ample resources, tutorials, and support for Python web scraping.

- Cross-Platform Compatibility: Python runs seamlessly on various operating systems, including Windows, macOS, and Linux.

Essential Libraries for Web Scraping in Python

Several Python libraries are commonly used for web scraping. Here are two of the most popular:

Beautiful Soup

Beautiful Soup is a Python library for parsing HTML and XML documents. It creates a parse tree from page source code, which can be used to extract data easily. Beautiful Soup is known for its simplicity and flexibility, making it a great choice for smaller scraping projects.

Scrapy

Scrapy is a powerful and comprehensive web scraping framework. It provides a structured approach to building web scrapers, handling tasks such as request scheduling, data extraction, and data storage. Scrapy is well-suited for larger and more complex scraping projects.

Setting Up Your Environment

Before you begin, you’ll need to set up your Python environment and install the necessary libraries. Here’s how:

- Install Python: Download and install the latest version of Python from the official website (python.org).

- Install pip: pip is Python’s package installer and is usually included with Python installations. Verify that it is installed by running the following command in your terminal:

    pip --version

- Install Beautiful Soup: Use pip to install Beautiful Soup:

    pip install beautifulsoup4

- Install Scrapy: Use pip to install Scrapy:

    pip install scrapy

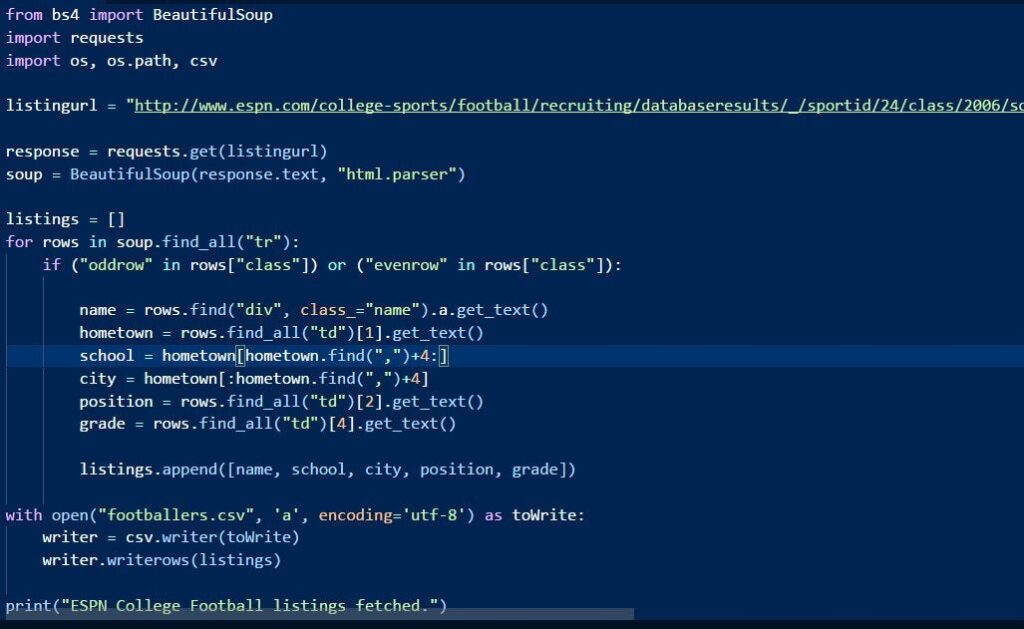

Basic Web Scraping with Beautiful Soup

Let’s start with a simple example of scraping data from a website using Beautiful Soup.

Making a Request

First, you need to make an HTTP request to the website you want to scrape. The requests library is commonly used for this purpose. If you don’t have it installed, install it with pip:

    pip install requests

Here’s an example of making a request:

    import requests

    url = "https://example.com"
    response = requests.get(url)

    if response.status_code == 200:
        html_content = response.content
        print("Request successful!")
    else:
        print(f"Request failed with status code: {response.status_code}")
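The status check above only covers responses that actually arrive. A request can also hang, or fail before any response comes back. The following is a minimal sketch of a more defensive fetch. The helper name fetch_html, the User-Agent string, and the 10-second timeout are assumptions made for illustration and are not part of the original example.

```python
import requests

# Illustrative identifier (an assumption); replace it with details of your own scraper.
HEADERS = {"User-Agent": "my-scraper/0.1"}


def fetch_html(url, timeout=10):
    """Return the response body as bytes, or None if the request fails."""
    try:
        response = requests.get(url, headers=HEADERS, timeout=timeout)
        # Raise requests.HTTPError for 4xx and 5xx status codes.
        response.raise_for_status()
    except requests.RequestException as exc:
        # Covers connection errors, timeouts, invalid URLs, and HTTP errors.
        print(f"Request failed: {exc}")
        return None
    return response.content


# Usage (performs a network request, so it is left commented out):
# html_content = fetch_html("https://example.com")
# if html_content is not None:
#     print("Request successful!")
```

Returning None on failure keeps the calling code simple. A larger project might prefer to let the exception propagate so that failures cannot be ignored by accident.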

Parsing HTML with Beautiful Soup

Once you have the HTML content, you can parse it using Beautiful Soup:

    from bs4 import BeautifulSoup

    soup = BeautifulSoup(html_content, 'html.parser')
    # Now you can use soup to navigate the HTML tree and extract data

Extracting Data

Beautiful Soup provides methods for finding elements by tag name, class, ID, and other attributes. Here are a few examples:

- Find a single element:

    soup.find('h1')

- Find all elements with a specific tag:

    soup.find_all('p')

- Find an element by class:

    soup.find('div', class_='content')

- Get the text content of an element:

    element.text

- Get the value of an attribute:

    element['href']
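To see these calls working together, here is a small self-contained sketch that runs them against an inline HTML string. The HTML is made up for this demonstration, so the tag names and class are assumptions rather than the structure of any real site.

```python
from bs4 import BeautifulSoup

# Made-up HTML used only for this demonstration.
html = """
<html><body>
  <h1>Example Title</h1>
  <div class="content">
    <p>First paragraph.</p>
    <p>Second paragraph.</p>
    <a href="/about">About</a>
  </div>
</body></html>
"""

soup = BeautifulSoup(html, "html.parser")

heading = soup.find("h1")                      # first <h1> element, or None
paragraphs = soup.find_all("p")                # list of every <p> element
content = soup.find("div", class_="content")   # first <div class="content">
link = content.find("a")                       # searches can start from any element

print(heading.text)                  # Example Title
print([p.text for p in paragraphs])  # ['First paragraph.', 'Second paragraph.']
print(link["href"])                  # /about
print(link.get("title"))             # None
```

Note the difference in the last two lines: indexing with link["title"] would raise a KeyError for a missing attribute, while link.get("title") returns None.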

Here’s a complete example of scraping all the links from a webpage:

    import requests
    from bs4 import BeautifulSoup

    url = "https://example.com"
    response = requests.get(url)

    if response.status_code == 200:
        html_content = response.content
        soup = BeautifulSoup(html_content, 'html.parser')

        for link in soup.find_all('a'):
            print(link.get('href'))
    else:
        print(f"Request failed with status code: {response.status_code}")
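The href values printed by the example above are raw attribute values. They can be relative paths, in-page fragments, non-web schemes such as mailto, or None when an anchor has no href. A minimal sketch of one way to clean them up using only the standard library follows. The helper name absolute_links and the sample values are hypothetical.

```python
from urllib.parse import urljoin, urlparse


def absolute_links(hrefs, base_url):
    """Resolve raw href values against base_url and keep only http(s) URLs."""
    links = []
    for href in hrefs:
        # link.get('href') returns None for <a> tags without an href attribute.
        if not href or href.startswith("#"):
            continue
        absolute = urljoin(base_url, href)
        if urlparse(absolute).scheme in ("http", "https"):
            links.append(absolute)
    return links


# Sample values standing in for [link.get('href') for link in soup.find_all('a')].
raw = ["/about", "contact.html", None, "#top",
       "mailto:hi@example.com", "https://other.example/page"]

for url in absolute_links(raw, "https://example.com/blog/"):
    print(url)
```

With the sample values above, the three surviving links are https://example.com/about, https://example.com/blog/contact.html, and https://other.example/page.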

Advanced Web Scraping with Scrapy

Scrapy is a more advanced framework that provides a structured approach to web scraping. It’s particularly useful for larger and more complex projects.

Creating a Scrapy Project

To create a Scrapy project, use the following command:

scrapy startproject myproject

This will create a directory named myproject with the following structure:

    myproject/
        scrapy.cfg            # deploy configuration file
        myproject/
            __init__.py
            items.py          # defines the data containers (items) that you'll be scraping
            middlewares.py    # spider and downloader middlewares that hook into request and response processing
            pipelines.py      # defines the data processing pipelines
            settings.py       # project settings file
            spiders/          # where the spiders live
                __init__.py

Defining Items

Items are data containers that you’ll be using to store the scraped data. Define your items in items.py. For example:

    import scrapy

    class MyItem(scrapy.Item):
        title = scrapy.Field()
        link = scrapy.Field()
        description = scrapy.Field()

Creating a Spider

Spiders are classes that define how a site is crawled and how data is extracted from its pages. Create a new spider in the spiders directory. For example:

    import scrapy
    from myproject.items import MyItem

    class MySpider(scrapy.Spider):
        name = "myspider"
        start_urls = ["https://example.com"]

        def parse(self, response):
            for article in response.css('article'):
                item = MyItem()
                item['title'] = article.css('h2 a::text').get()
                item['link'] = article.css('h2 a::attr(href)').get()
                item['description'] = article.css('p::text').get()
                yield item

Running the Spider

To run the spider, use the following command:

scrapy crawl myspider

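This will start the spider and scrape data from the specified website. The scraped data can be exported to formats such as JSON, CSV, or XML through Scrapy’s feed exports, for example by passing an output file with the -o option (scrapy crawl myspider -o items.json).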
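Exports can also be declared once in the project settings instead of on the command line. The fragment below is a sketch of Scrapy's FEEDS setting as it might appear in settings.py. The output paths are illustrative assumptions.

```python
# myproject/settings.py (fragment)

# Write the scraped items to both files on every run of the spider.
# The output paths are illustrative; choose any location you like.
FEEDS = {
    "output/items.json": {"format": "json", "encoding": "utf8"},
    "output/items.csv": {"format": "csv"},
}
```

With this in place, running scrapy crawl myspider produces both files without any extra command-line options.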

Handling Dynamic Content

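Many modern websites use JavaScript to dynamically generate content. Because an HTTP client such as requests downloads only the initial HTML and does not execute JavaScript, the techniques shown so far may not see this content. To handle it, you can drive a real browser with a tool like Selenium or Puppeteer. Puppeteer is a Node.js library, so Selenium is the more direct fit for a Python project.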

Selenium

Selenium is a browser automation tool that can be used to control a web browser and interact with web pages. It’s particularly useful for scraping dynamic content.

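To use Selenium, you’ll need to install it and make a WebDriver available for your browser. Here’s how:

- Install Selenium:

    pip install selenium

- Download WebDriver: Download the WebDriver for your browser (e.g., ChromeDriver for Chrome) and place it in your system’s PATH. Recent Selenium releases (4.6 and later) include Selenium Manager, which can usually locate or download a matching driver automatically.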

Here’s an example of using Selenium to scrape a page whose content is rendered with JavaScript:

    from bs4 import BeautifulSoup
    from selenium import webdriver

    # Initialize the WebDriver (e.g., ChromeDriver)
    driver = webdriver.Chrome()

    try:
        # Load the webpage
        driver.get("https://example.com")

        # Wait for the dynamic content to load (optional).
        # Note: an implicit wait only affects element lookups such as find_element.
        # driver.implicitly_wait(10)

        # Get the rendered HTML content
        html_content = driver.page_source

        # Parse the HTML with Beautiful Soup
        soup = BeautifulSoup(html_content, 'html.parser')

        # Extract the data
        # ...
    finally:
        # Close the browser, even if an error occurred above
        driver.quit()

Best Practices for Web Scraping

When you scrape a website with Python, it’s important to follow best practices to avoid being blocked or causing problems for the website.

- Respect the robots.txt file: The robots.txt file specifies which parts of the website should not be scraped. Always check this file before scraping.

- Limit your request rate: Sending too many requests in a short period of time can overload the website’s server. Use delays between requests to avoid this.

- Use headers: Set appropriate headers in your requests, such as a User-Agent string that identifies your scraper, so that site operators can tell who is making the requests.

- Handle errors gracefully: Implement error handling to deal with network issues, server errors, and other unexpected problems.

- Store data responsibly: Store the scraped data in a structured format and respect the website’s terms of service and privacy policy.

- Consider using proxies: If you need to make a large number of requests, consider using proxies to avoid being blocked.
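The first two practices in this list can be sketched with the standard library alone. The example below checks each URL against robots.txt and pauses between requests. The helper names, the User-Agent string, and the one-second default delay are assumptions made for illustration, and fetch stands for whatever download function you use (for example, a wrapper around requests.get).

```python
import time
from urllib.robotparser import RobotFileParser

# Illustrative identifier (an assumption); describe your own scraper here.
USER_AGENT = "my-scraper/0.1"


def load_robots(robots_url):
    """Download and parse a robots.txt file (performs a network request)."""
    parser = RobotFileParser()
    parser.set_url(robots_url)
    parser.read()
    return parser


def polite_fetch_all(urls, fetch, parser, delay_seconds=1.0, sleep=time.sleep):
    """Fetch each URL that robots.txt allows, pausing between requests.

    fetch is any callable that takes a URL and returns its content.
    """
    results = {}
    for url in urls:
        if not parser.can_fetch(USER_AGENT, url):
            print(f"Skipping disallowed URL: {url}")
            continue
        if results:
            # Pause before every request except the first one.
            sleep(delay_seconds)
        results[url] = fetch(url)
    return results


# Usage (performs network requests, so it is left commented out):
# parser = load_robots("https://example.com/robots.txt")
# pages = polite_fetch_all(["https://example.com/"], my_fetch, parser)
```

In a Scrapy project the same ideas are available as settings, namely ROBOTSTXT_OBEY and DOWNLOAD_DELAY, so no custom code is needed there.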

Common Challenges and Solutions

Web scraping can be challenging, and you may encounter various issues. Here are some common challenges and solutions:

- Website Structure Changes: Websites frequently change their structure, which can break your scraper. Monitor your scraper regularly and update it as needed.

- IP Blocking: Websites may block your IP address if they detect excessive scraping activity. Use proxies or rotate your IP address to avoid this.

- CAPTCHAs: Websites may use CAPTCHAs to prevent automated scraping. Use CAPTCHA solving services or implement techniques to bypass CAPTCHAs.

- Dynamic Content: As discussed earlier, use tools like Selenium or Puppeteer to handle dynamic content.
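For the first challenge, one simple way to notice a structure change early is to check every scraped record for empty fields, since selectors that no longer match typically yield None or an empty string. The sketch below uses a hypothetical helper, missing_fields, and reuses the field names from the item defined earlier. The sample records are made up.

```python
REQUIRED_FIELDS = ("title", "link", "description")


def missing_fields(item, required=REQUIRED_FIELDS):
    """Return the names of required fields that are absent, None, or blank."""
    missing = []
    for field in required:
        value = item.get(field)
        if value is None or not str(value).strip():
            missing.append(field)
    return missing


# Made-up records: the second one simulates selectors that stopped matching.
scraped = [
    {"title": "First post", "link": "/first", "description": "Intro"},
    {"title": None, "link": "/second", "description": "  "},
]

for item in scraped:
    problems = missing_fields(item)
    if problems:
        print(f"Possible layout change, missing {problems} in {item.get('link')}")
```

A check like this can run inside a Scrapy item pipeline or right after a Beautiful Soup extraction loop. A sudden rise in missing fields is a strong hint that the site's markup has changed.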

Legal and Ethical Considerations

Web scraping involves legal and ethical considerations. It’s important to be aware of these considerations and to act responsibly.

- Terms of Service: Review the website’s terms of service to ensure that scraping is permitted.

- Copyright: Respect copyright laws and avoid scraping copyrighted material without permission.

- Privacy: Protect the privacy of individuals and avoid scraping personal information without consent.

- Impact on Website: Avoid overloading the website’s server and causing performance issues.

Conclusion

Web scraping with Python is a powerful technique for extracting data from websites. By using libraries like Beautiful Soup and Scrapy, you can automate the process of collecting data and gain valuable insights. Remember to follow best practices, handle dynamic content effectively, and respect legal and ethical considerations. This comprehensive guide provides a solid foundation for you to scrape a website with Python efficiently and responsibly.